This is the third post in a series. If you started here, you might want Part 1 for the overview of what California and Michigan said about i-Ready, and Part 2 for the line-by-line of Michigan’s review. This one is the personal one. The one where I tell you what i-Ready missed for my own kid, and what I wish I had known a year and a half earlier.

The thing they don’t explain in the parent letter

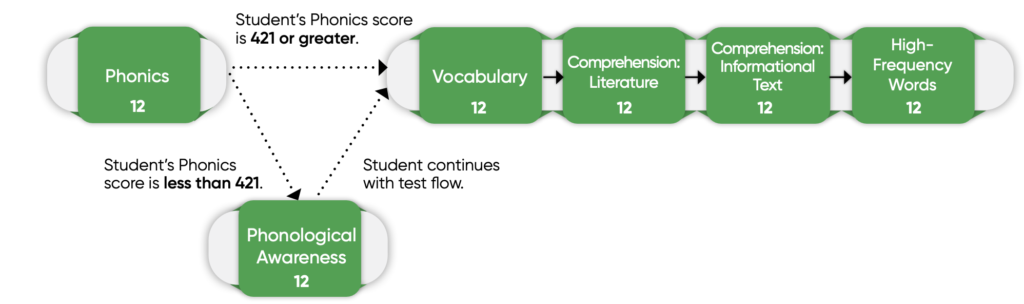

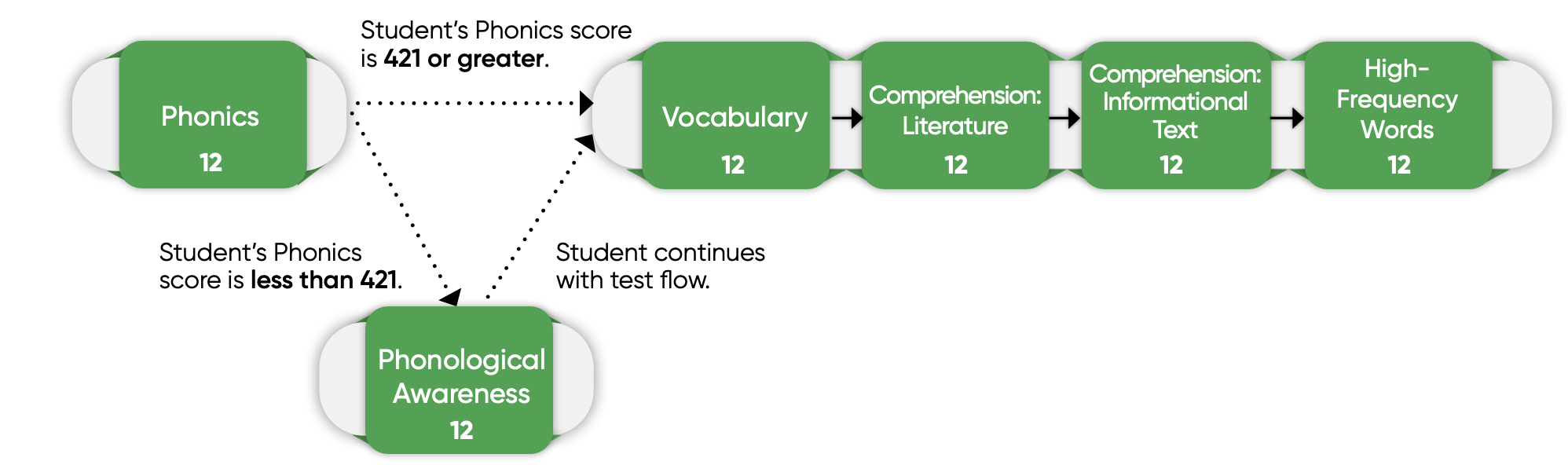

Here is something that is not explained well in any of the parent-facing i-Ready material: if a student performs “well enough” in one area, the test may skip assessing an underlying related skill entirely.

That’s how computer-adaptive testing works. For a diagnostic, that is fine. The point of a diagnostic is to figure out what to teach next, and you don’t need to make a kid grind through items they have already mastered.

But for a screener, it can be catastrophic. The whole point of a universal screener is to ask every kid every relevant question, so that the kids whose gaps live somewhere unexpected are still caught. As soon as the test starts skipping domains, it has stopped being a screener.

Schools use i-Ready as a screener anyway. So it matters to know exactly where the test draws the line and stops asking phonological awareness questions.

The score where it happens

The precise answer is a Phonics scaled score of 421. Curriculum Associates states this in its own internal documentation. Once a student crosses 421 on Phonics, the algorithm will adapt them out of phonological awareness items.

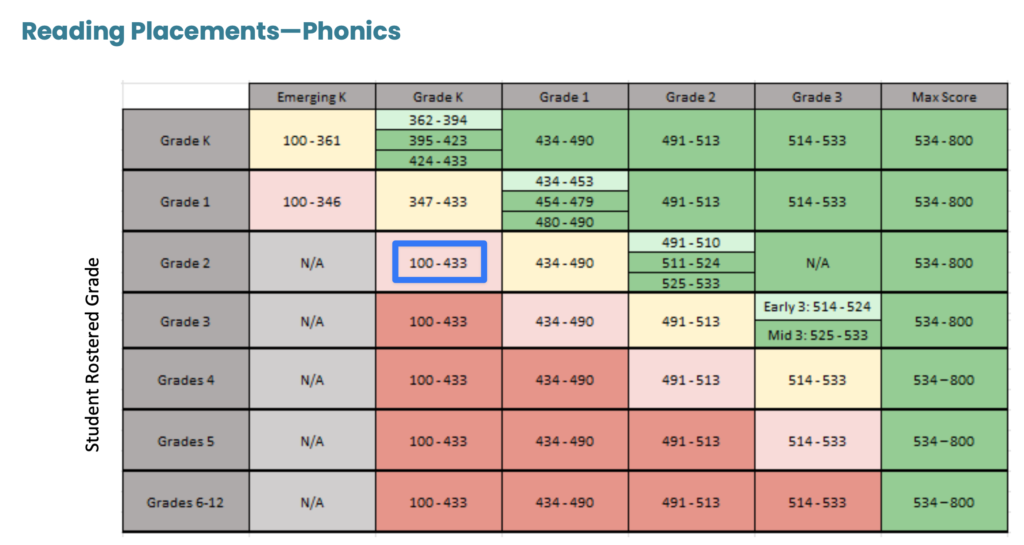

When I first saw this, I assumed 421 was on grade level for a second grader. After all, that’s the grade where most kids are taking i-Ready as a screener.

I was wrong.

A Phonics scaled score of 421 lands roughly mid-band for kindergarten on Curriculum Associates’ own published placement tables. That means a second grader who scores at a kindergarten level on Phonics has just been routed past the assessment items that measure the underlying sound-manipulation skills that predict reading difficulty.

A generous reading puts that score one full grade level below where a second grader should be. A more honest one puts it two or more below.

In other words: Curriculum Associates’ algorithm has decided that if your second grader has the phonics skills of a kindergartener, the test does not need to measure their phonological awareness. At that point, the screener is not identifying risk. It is filtering it out.
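For readers who think in code, the rule can be sketched like this. It is only an illustration of the rule as described above, not Curriculum Associates' actual code: the function name is made up, and it assumes that "crossing" 421 means scoring strictly above it.

```python
# Hypothetical sketch of the skip rule described in this post.
# This is NOT Curriculum Associates' code; the names are invented for illustration.
PHONICS_SKIP_THRESHOLD = 421  # Phonics scaled score cited in this post


def phonological_awareness_administered(phonics_scaled_score: int) -> bool:
    """Return True if phonological awareness items would still be given.

    Assumption: "crossing" the threshold means scoring strictly above it.
    """
    return phonics_scaled_score <= PHONICS_SKIP_THRESHOLD


for score in (400, 421, 422, 450):
    status = "administered" if phonological_awareness_administered(score) else "skipped"
    print(f"Phonics {score}: phonological awareness {status}")
```

The point of writing it out is how little it takes: one comparison on one domain decides whether another domain gets measured at all.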

What you actually get vs. what you need

Most parents never see the data they need. What you get is a label. “On Track.” “Early.” “Mid.” “Late.” Sometimes a percentile. Maybe a colored band on a dashboard.

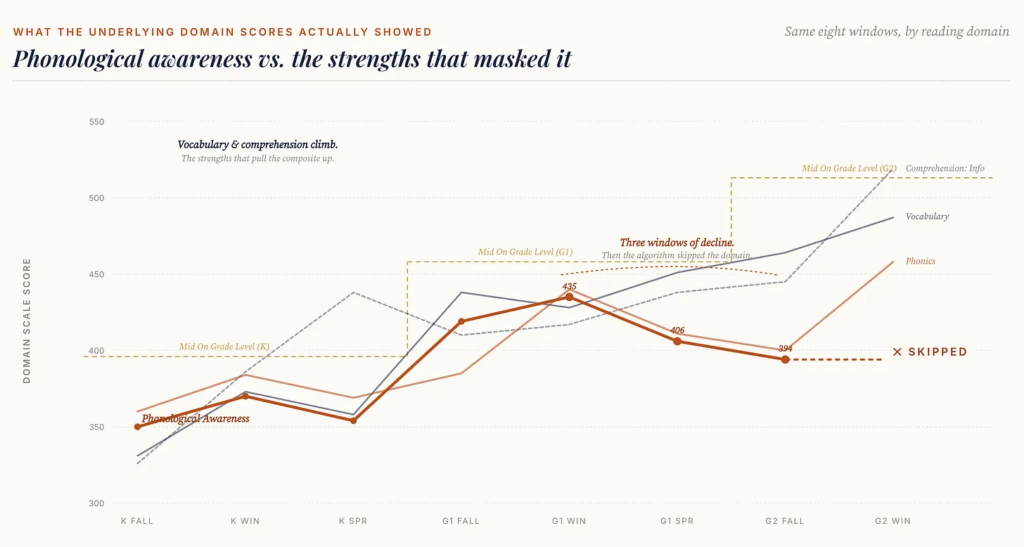

Those labels are built on composite scores. And composites can hide real problems. A kid can compensate with vocabulary or memory while struggling with decoding or phonological awareness. The average looks fine. The profile is not. This is especially true for twice-exceptional kids, the ones whose verbal scores carry the composite while their foundational reading skills quietly slide.

If you want to understand what is actually going on, you need to ask for:

- The full assessment report, not the parent summary

- Subtest or domain-level scores, not labels

- The scoring tables the school uses to interpret results

At my school, this was not offered. I had to request it. You have a right to the data. It’s called FERPA. Sweetie, look it up. (All jokes aside, the U.S. Department of Education’s FERPA guidance confirms that parents have the right to inspect and review their child’s education records, and assessment data falls inside that.) Until proven otherwise, assume yours will not be offered either, and ask now.

What to look for once you have the data

Once the actual report is in your hands, focus on patterns, not headline numbers.

Are foundational skills weaker than higher-level ones? A kid who is strong on vocabulary and comprehension but weak on phonological awareness or phonics is the textbook profile of a kid headed for trouble, even if the composite looks fine. The International Dyslexia Association has put this profile front and center in their parent resources for a reason.

Are there large gaps between domains? A 70-percentile gap between vocabulary and phonics is not “balance.” It is a flag.

Are scores clustered just above intervention cutoffs? This last one is the group I would watch hardest. The kids who score consistently in the high 30s and low 40s are the ones most likely to be missed. Screeners are supposed to catch them. As we saw in Part 2, the Michigan review found that i-Ready specifically did not provide accuracy evidence for that band.

And don’t stop after one report. Make a spreadsheet. Get some graph paper. Track this stuff over time. The school and i-Ready are not your backstops. You are.

A real-world example (mine)

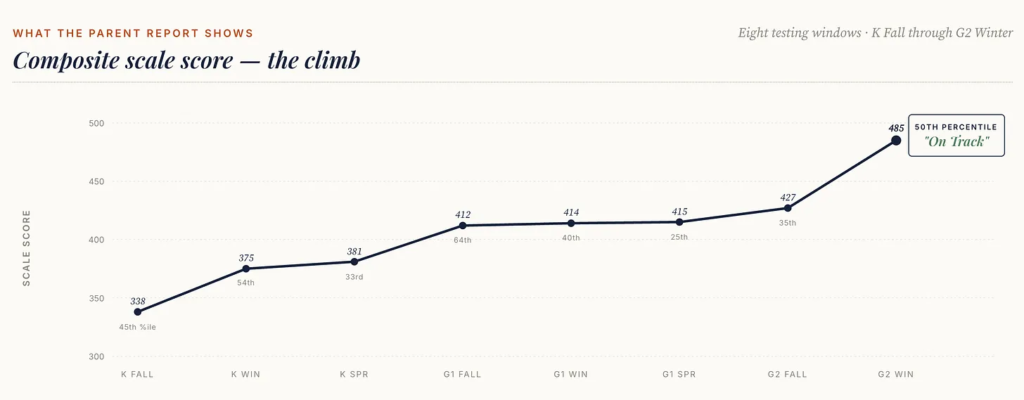

After a year of flat reading growth (we still didn’t qualify for intervention; we were sitting at the 25th percentile), I requested a special education evaluation and hired a private tutor. Things felt off. I didn’t have the language for what was wrong. I just knew it was wrong.

We saw some recovery. I didn’t know what I didn’t know.

What was actually happening: the things we were focusing on (more stories, more words, more reading aloud at bedtime) effectively masked the worsening struggle in the foundational areas. This is the danger of only looking at a composite. The house looks fine. It is being built on sand.

As an added bonus, skipping an entire domain (so the denominator becomes five instead of six) and effectively dropping the lowest score gives the composite a healthy boost.
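To see why a smaller denominator helps, here is a toy calculation. Everything in it is an assumption: the domain names and scores are invented, and it treats the composite as a simple unweighted average of whichever domains were administered, which may not be how the real composite is computed.

```python
from statistics import mean

# Hypothetical domain scores (not real data).
# Assumption: the composite is a plain average of the administered domains.
scores = {
    "phonological_awareness": 20,  # the weakest domain
    "phonics": 40,
    "high_frequency_words": 55,
    "vocabulary": 70,
    "comprehension_literature": 60,
    "comprehension_informational": 55,
}

# Average over all six domains.
all_six = mean(scores.values())

# Average over five domains, with phonological awareness skipped.
without_pa = mean(v for k, v in scores.items() if k != "phonological_awareness")

print(f"All six domains: {all_six:.1f}")
print(f"Phonological awareness skipped: {without_pa:.1f}")
print(f"Boost from skipping: {without_pa - all_six:.1f}")
```

With these made-up numbers the average moves from 50 to 56 without the child learning anything new. The weaker the skipped domain, the bigger the boost.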

When I finally saw my son’s domain-level data, I could math out what the missing section was hiding. Skipping phonological awareness pulled his composite up by about 15 percentile points. Had he been given the phonological awareness section and scored in line with his prior-year results, his composite would have placed him around the 35th percentile.

His composite, as reported, put him at the 50th. Problem solved? No. Problem hidden.

Looking at that slope still guts me. I’m not a public person, but this is the part I keep saying out loud anyway, because I’m not the only mom looking at one of these reports right now and wondering whether to push. I am not ashamed that he struggled. Struggle is where growth comes from. What I am ashamed of is that I didn’t know, and that I didn’t intervene sooner. If one family doesn’t have to live this slope because of this post, it is worth it.

Timing is everything

Early intervention works. Late intervention is harder, longer, and less predictable. Decades of research, summarized cleanly by Reading Rockets, keep landing on the same finding: the foundational skills (decoding, phonemic awareness, letter-sound correspondence) are far easier to build in kindergarten and first grade than later. A delay in identifying gaps has compounding effects.

There is no amount of will or effort you can add in the fall of second grade that makes up for what was missed in the fall of first. I have tried. Trust me.

Bottom line

No single assessment tells the whole story. To be fair, that is probably too much to ask of any one tool. But if a tool is being used to make decisions about whether your kid gets reading intervention, the difference between “measured” and “assumed” can change the trajectory of an entire childhood.

Please don’t trust i-Ready with that decision.

Your action step this week

Send this email today, and put a calendar reminder for the 45-day FERPA response window:

“Hi [Teacher / Special Ed Coordinator], under FERPA I’d like to request a copy of [child’s name]’s complete reading screener record for this school year, including all subtest and domain-level scores, the report on which sections of the adaptive assessment were administered (and which were skipped), and the scoring tables the district uses to determine cut scores. I’d also like a copy of the district’s policy on which screener results trigger diagnostic follow-up. Thanks so much.”
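For the calendar reminder, a quick way to get the date is sketched below. It assumes the 45 days are calendar days counted from the day you send the email; the window may instead be counted from the day the school receives the request, so treat the result as a reminder date rather than a legal deadline. The send date shown is a placeholder.

```python
from datetime import date, timedelta

FERPA_RESPONSE_DAYS = 45  # response window mentioned above


def ferpa_reminder(sent_on: date) -> date:
    """Return the calendar date 45 days after the request was sent.

    Assumption: calendar days, counted from the send date.
    """
    return sent_on + timedelta(days=FERPA_RESPONSE_DAYS)


sent = date(2025, 9, 2)  # hypothetical send date; replace with your own
print(ferpa_reminder(sent).isoformat())
```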

Then, when you get the data, look at the phonological awareness line first. If it is missing entirely, you now know exactly why. And you have somewhere to start.

This is the last post in the series. If you missed the earlier ones:

- Part 1: Two States Just Said i-Ready Doesn’t Cut It as a Reading Screener

- Part 2: i-Ready Scored 50% in Michigan’s K-3 Screener Review. Here’s the Line-by-Line.

Part of the Tests hub. For parent-friendly framing of how testing works in special education and what to push back on, see What You Need to Know About Tests.
