Your kid took the test. The school sent home a number. The number says 85. The eligibility cutoff is 85. The school says, “Doesn’t qualify.”

You stare at that 85 and the 85 next to it and the room goes quiet. The math feels final.

Here is what nobody told you in that meeting: that 85 is not a single number. It is a range. And depending on the test, the real number could be anywhere from 81 to 89. The score the school wrote down is a best guess, not a fact. Every standardized test in the country comes with a margin of error built into it, published in its own manual, taught to school psychologists in graduate school. And in the meeting where it would have changed your kid’s life, no one said the words.

This post is about that margin of error. What it is, why it exists, why it matters most at the exact place where eligibility is decided, and what schools say to keep it out of the conversation.

The thing the test manual already tells you

Every test you have ever heard of, every WISC, every WIAT, every CTOPP, every CELF, comes with a number called the standard error of measurement. It is published in the test’s manual. Reporting it is called for by the Standards for Educational and Psychological Testing, the professional standards test publishers are expected to meet. It is not optional, theoretical, or controversial.

What it tells you is simple. If you gave the same kid the same test five times in a row and somehow erased their memory of it between sittings, the scores would not be identical. They would cluster around a true score, but each individual administration would produce a slightly different number. The standard error of measurement is the math that describes how wide that cluster is.
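For the curious, here is that thought experiment as a small Python simulation. It is a sketch under the classical test theory assumption that every sitting equals the true score plus random, normally distributed error. The true score of 85 and the SEM of 2.5 are made-up inputs, and `simulate_administrations` is a hypothetical helper, not anything from a test publisher.

```python
import random
import statistics


def simulate_administrations(true_score: float, sem: float, n: int, seed: int = 0) -> list[float]:
    """Simulate n 'memory-erased' sittings of the same test.

    Assumption (classical test theory): each observed score is the true score
    plus independent, normally distributed error whose standard deviation is the SEM.
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    return [rng.gauss(true_score, sem) for _ in range(n)]


scores = simulate_administrations(true_score=85, sem=2.5, n=10_000)

print([round(s, 1) for s in scores[:5]])   # first five sittings: scattered around 85
print(round(statistics.mean(scores), 1))   # the cluster centers near the true score
print(round(statistics.stdev(scores), 1))  # the spread comes out near 2.5, the SEM
```

The spread of the simulated scores lands at about the SEM that went in, which is all the SEM is: the typical distance between one sitting and the true score.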

A WISC-V Full Scale IQ has a standard error of measurement of about 2.5 points. Individual subtest scores have larger SEMs, because each subtest is shorter and less reliable than a composite built from several of them. A reading composite from a screener can have an SEM as wide as 5 to 7 points, depending on which subtests are included. The wider the SEM, the less you should trust any single administration of the test.
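Where do those SEM numbers come from? Under classical test theory, the SEM follows from two things printed in the manual: the standard deviation of the score scale (15 for standard scores) and the test’s reliability coefficient. The formula is SEM = SD × √(1 − reliability). The sketch below plugs in three reliability values that are illustrative only, not taken from any manual.

```python
import math


def sem_from_reliability(sd: float, reliability: float) -> float:
    """Classical test theory: SEM = SD * sqrt(1 - reliability)."""
    if not 0.0 <= reliability <= 1.0:
        raise ValueError("reliability must be between 0 and 1")
    return sd * math.sqrt(1.0 - reliability)


# Illustrative reliabilities only; look up the real values in the test manual.
examples = [
    ("very reliable composite", 0.97),
    ("typical subtest", 0.90),
    ("less reliable screener", 0.80),
]
for label, reliability in examples:
    print(label, round(sem_from_reliability(15, reliability), 1))
# very reliable composite 2.6
# typical subtest 4.7
# less reliable screener 6.7
```

A modest drop in reliability produces a big jump in the SEM, which is why a single subtest or a short screener deserves less trust than a full composite.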

When a test report gives you a score and a 95% confidence interval, what you are looking at is the SEM converted into a range. A score of 85 with a 95% CI of 80 to 90 means: based on the math of this test, we are 95% confident the kid’s true score sits somewhere between 80 and 90. The 85 is just the point in the middle. The number you should pay attention to is the band.
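The conversion from SEM to a 95% confidence interval is one line of arithmetic: score ± 1.96 × SEM. Here it is as a minimal Python sketch, using the roughly 2.5-point WISC-V Full Scale IQ SEM mentioned above. One hedge: some manuals center the band on an estimated true score instead of the obtained score, so the interval printed in a report can be shifted slightly from what this gives you. `confidence_interval` is a hypothetical helper name.

```python
from statistics import NormalDist


def confidence_interval(score: float, sem: float, level: float = 0.95) -> tuple[float, float]:
    """Band of +/- z * SEM around the obtained score.

    z is the two-sided critical value for the chosen level (about 1.96 for 95%).
    """
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return score - z * sem, score + z * sem


low, high = confidence_interval(85, 2.5)
print(f"95% CI: {low:.0f} to {high:.0f}")  # 95% CI: 80 to 90
```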

Why this matters at the cutoff

Most of the time, confidence intervals are interesting but not decisive. If your kid scored 110 and the CI is 105 to 115, your kid is above average and stays above average no matter where in the band the true score lives. The CI is a footnote.

The math gets sharp at the eligibility cutoff. That is where the band crosses the line.

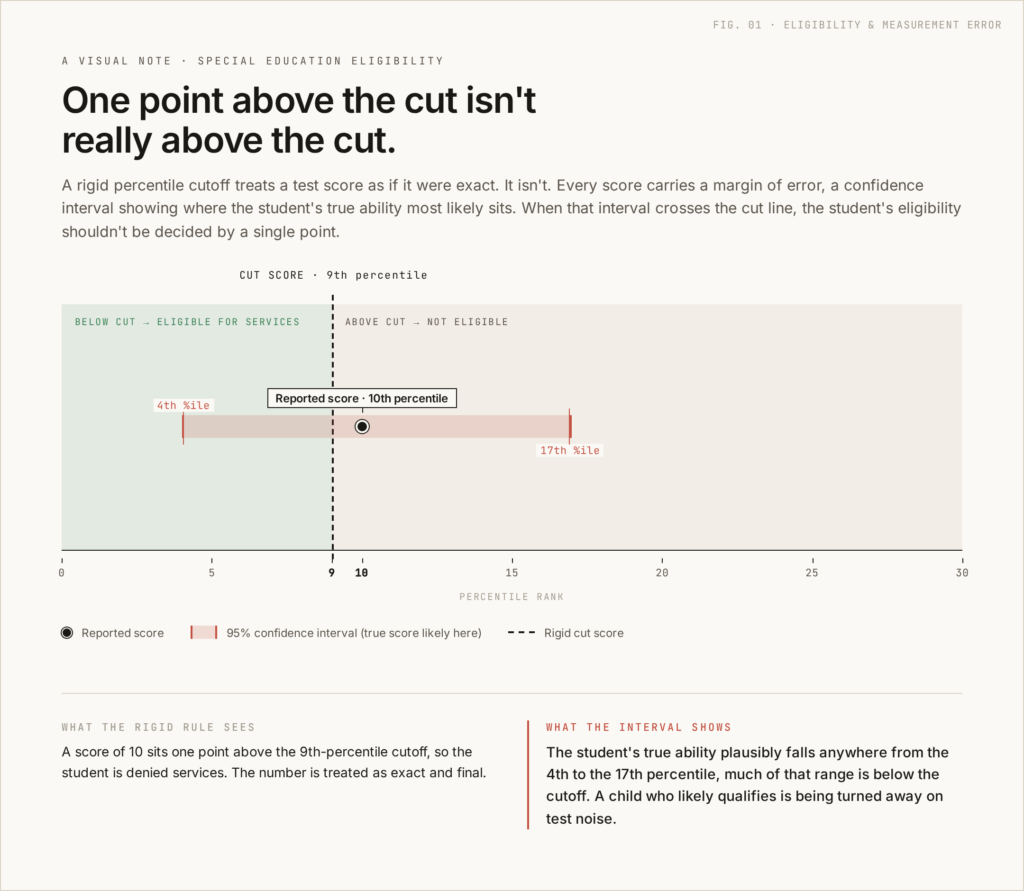

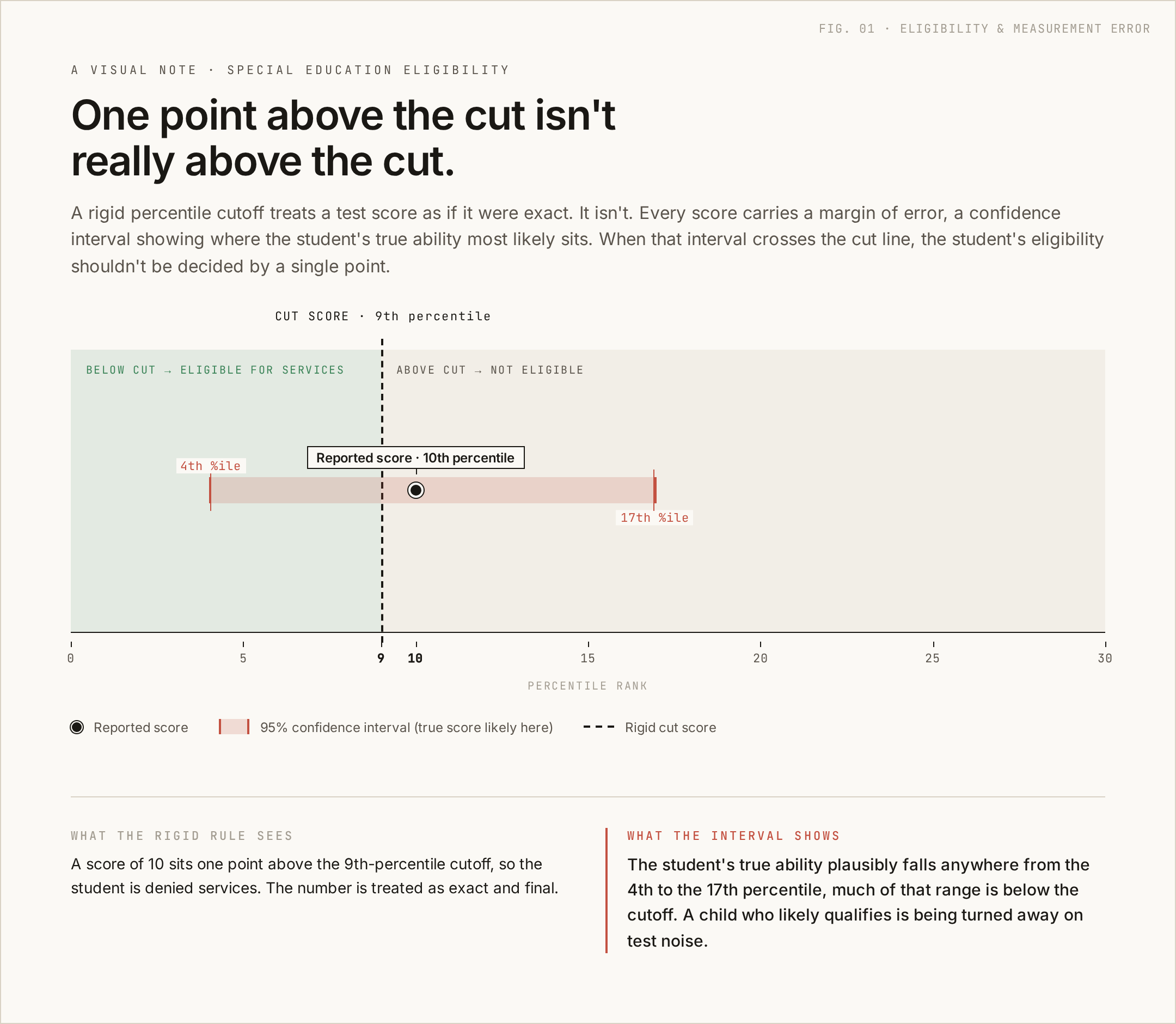

Imagine the cutoff for SLD eligibility in your state is the 9th percentile. Your kid took the WJ Reading Fluency subtest and scored at the 10th percentile. The school says, “Doesn’t qualify.” The number is one percentile above the line.

But pull up the test manual. SEMs are reported in standard-score points, not percentiles, so the band has to be converted back into percentile terms. Say the SEM for that subtest at your kid’s age puts the 95% confidence interval on a 10th percentile score at roughly the 6th to the 14th percentile. The true score might be at the 6th. The true score might be at the 14th. The single point estimate of 10 sits inside a band that reaches to both sides of the eligibility line.

When the band crosses the cutoff, the school has not actually established that the kid is above the line. They have established that one specific administration of one specific test produced a number that landed slightly above the line. That is not the same thing.

This is where confidence intervals stop being a footnote and start being the entire conversation.
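If you want to check a percentile score against a percentile cutoff yourself, the steps are: convert the percentile to a standard score (mean 100, SD 15, assuming scores are normally distributed), build the band in standard-score points, then convert both ends back to percentiles. The Python sketch below does that. The SEM of 2 standard-score points is hypothetical, picked only because it gives a band close to the 6th-to-14th range in the example; the real value is in the manual. All function names are made up for illustration.

```python
from statistics import NormalDist

MEAN, SD = 100, 15            # standard-score scale these tests commonly report on
NORM = NormalDist(MEAN, SD)   # assumption: scores are normally distributed


def percentile_to_standard(pct: float) -> float:
    return NORM.inv_cdf(pct / 100)


def standard_to_percentile(score: float) -> float:
    return NORM.cdf(score) * 100


def percentile_band(pct: float, sem: float, level: float = 0.95) -> tuple[float, float]:
    """Build the CI in standard-score points, then convert both ends to percentiles."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    score = percentile_to_standard(pct)
    return (standard_to_percentile(score - z * sem),
            standard_to_percentile(score + z * sem))


def band_crosses_cutoff(band: tuple[float, float], cutoff_pct: float) -> bool:
    low, high = band
    return low <= cutoff_pct <= high


# Hypothetical SEM of 2 standard-score points; use the manual's real value.
band = percentile_band(10, sem=2.0)
print(f"95% band: percentile {band[0]:.0f} to percentile {band[1]:.0f}")
print("Crosses the 9th percentile cutoff:", band_crosses_cutoff(band, 9))
```

Notice the band is lopsided in percentile terms even though it is symmetric in standard-score points; percentiles bunch up in the middle of the distribution and stretch out in the tails, which is why manuals report the SEM in standard-score units. Running `percentile_band(8, sem=2.0)` shows the same crossing from the other side of the line.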

“We don’t use confidence intervals.”

This is the line. It is delivered casually, in the same flat tone as “we’ll send you the draft to review” or “we ran out of time.” It sounds like a procedural detail. It is not.

What the school is telling you, when they say they don’t use confidence intervals, is that they treat scores at face value. A score of 85 is exactly 85: a measured fact, rather than the noisy approximation the test publisher itself says it is.

Every state and regional eligibility guideline I have read includes language about the standard error of measurement. Some name it explicitly. Some refer to it indirectly by warning teams not to apply rigid numerical cutoffs. The federal regulations under IDEA require schools to use technically sound instruments (34 CFR 300.304(b)(3)). Treating the SEM as if it does not exist is not technically sound. It is technically wrong.

If the team at your meeting says they do not use confidence intervals, what you are watching is one of two things. Either the school psychologist running the eligibility analysis has not been trained well, in which case the analysis is incomplete, or they have been trained and the policy is to ignore the math when it is inconvenient. Either way, it belongs in the meeting record.

What to say back

You do not need to lecture anyone. You need to ask three questions in a calm voice and let the answers create the record.

“Can you tell me the standard error of measurement for this test? I’d like to understand the confidence interval around this score.”

If the answer is “we don’t use confidence intervals,” ask whether they will document that position in the Prior Written Notice. Under 34 CFR 300.503, the district must issue PWN whenever it proposes or refuses to initiate or change a child’s identification, evaluation, or placement, which includes eligibility decisions. Forcing the position into writing is what makes it reviewable.

“The score is one percentile above the cutoff. What is the SEM for this subtest at this age, and does the confidence interval cross the cutoff?”

This question is a math question. There is a right answer printed in the test manual. If the school psychologist cannot answer it on the spot, ask for the answer in writing within ten school days. They have it. They are required to use it.

“Does the district’s published eligibility guidance say anything about how to interpret scores near the cutoff?”

You are asking this because most district guidelines do address this exact situation, and most of them say teams should not apply rigid cutoffs when a score falls within the measurement error of the line. If the meeting is operating contrary to the district’s own published guidance, you have the easiest possible argument. Hold them to their own standards.

The smaller question they hope you don’t ask

There is one more thing worth knowing. The math of confidence intervals cuts both ways. A kid who scored at the 8th percentile with a CI of the 4th to the 12th percentile might also have a true score above the cutoff. The school could just as easily have qualified a kid whose true performance does not warrant it.

The honest answer is that scores near the cutoff are a coin flip wrapped in a band. The right response is not to mechanically apply the cutoff. It is to look at the rest of the data. Other subtests. Classroom observation. Outside evaluations. Trend data over time. The full picture of how the kid functions in the building.

When schools say “the cutoff is the cutoff,” they are skipping the hard work of the analysis. The federal regulation that bars using any single measure or assessment as the sole criterion for eligibility (34 CFR 300.304(b)(2)) exists because no single score is reliable enough to determine eligibility on its own. The SEM is the mathematical reason that rule exists.

What to do this week

If your kid is anywhere near a cutoff, do three things before the next meeting.

One, find the test manual or look up the SEM for the specific subtest online. Many publishers post this information in technical documentation. The subtests in WISC-V, WJ IV, KTEA-3, CTOPP-2, and CELF-5 are all well-documented.

Two, calculate or look up the 95% confidence interval for the score in question. If you want to skip the math, plug it into the Confidence Interval Calculator and it will show you the band visually. Take the result with you to the meeting.

Three, write down the three questions above. Read them off your notes if you need to. The questions are short, calm, and impossible to dismiss. They put the math back where it belongs.

The 85 your kid scored is not the score. The score is a band. The cutoff at 85 does not separate the kid from services. It separates the school from doing the work to look at the rest of the data.

Make them do the work.

Part of the IEP guide hub. For the bigger picture on what your child’s IEP can include, see the complete guide to related services in the IEP.

Part of the Tests hub. For parent-friendly framing of how testing works in special education and what to push back on, see What You Need to Know About Tests.

About Decoding Mom

Decoding Mom is written by a mom of a bright kid with ADHD and mild dyslexia. After too many late-night research binges trying to make phonics fun, she started this site to translate the science of reading, IEPs, and special-ed assessments for parents figuring it out the hard way. Honest, parent-first, no fluff.
