When the school sends home a reading score, the implied message is simple. The test was given. The number is the answer. If your kid is On Track, you can stop worrying.

That message would only be true if reading screeners worked the way most parents assume they do. They do not. The framework you actually need to evaluate a screener is not from the world of education research. It is from the world of medical diagnostics.

I spent a few hours reading through the actual research literature on reading screeners after my own kid kept getting reassuring scores that did not match what I was seeing at home. What I found is that there are two specific numbers that tell you whether a screener actually does what it claims to do. Doctors look at these numbers when they evaluate any diagnostic test. Almost no parent is shown them. Once you understand them, you can ask the school questions you could not ask before.

The Two Questions Every Screener Has To Answer

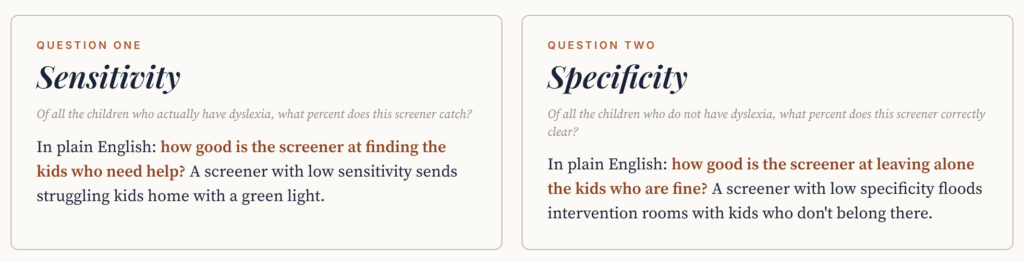

Every screener, whether it is testing for cancer or for reading difficulty, has to answer two questions:

Sensitivity: of all the kids who actually have the condition, what percent does this test catch?

Specificity: of all the kids who do not have the condition, what percent does this test correctly clear?

Those are two completely different numbers. A test can be very good at one and bad at the other. A test can also be terrible at one and the marketing materials will still call it “accurate.”
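If it helps to see the arithmetic, here is a minimal sketch in Python. The class of 100 kids and every count in it are made up for illustration, and the function name `screener_rates` is mine, not something from any vendor or study. It shows how a test can look "accurate" overall while still missing most of the kids it exists to find.

```python
def screener_rates(caught, missed, cleared, over_flagged):
    """Return (sensitivity, specificity, accuracy) as fractions.

    caught       = kids with the condition that the test flagged
    missed       = kids with the condition that the test cleared
    cleared      = kids without the condition that the test cleared
    over_flagged = kids without the condition that the test flagged
    """
    has_condition = caught + missed
    no_condition = cleared + over_flagged
    sensitivity = caught / has_condition
    specificity = cleared / no_condition
    accuracy = (caught + cleared) / (has_condition + no_condition)
    return sensitivity, specificity, accuracy


# Invented example: 100 kids, 10 of whom have a reading difficulty.
sens, spec, acc = screener_rates(caught=4, missed=6, cleared=88, over_flagged=2)
print(f"sensitivity {sens:.0%}, specificity {spec:.0%}, accuracy {acc:.0%}")
```

In this invented class the test is right about 92 of 100 kids, yet it sends home 6 of the 10 kids who needed help with a green light. The headline accuracy figure hides that.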

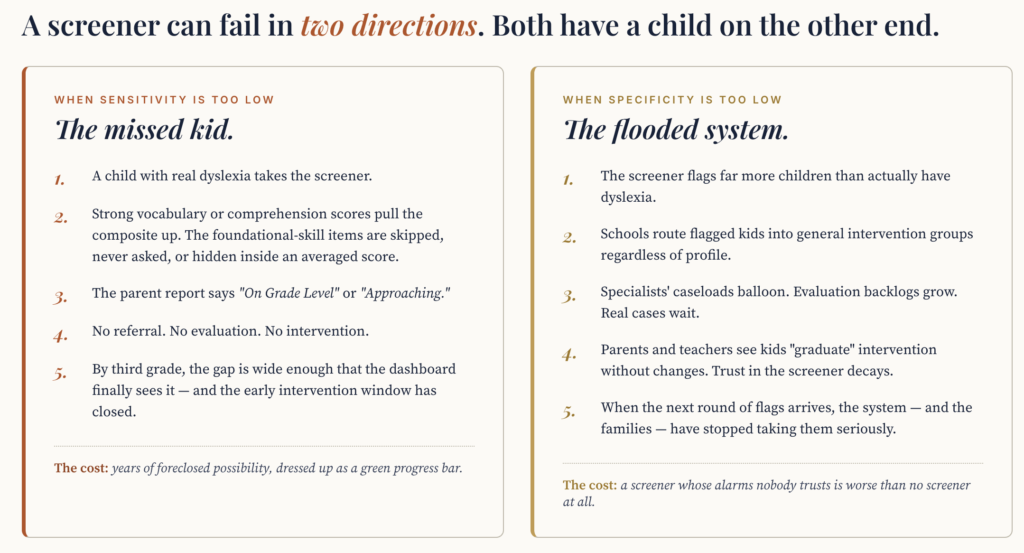

Sensitivity is the number that matters most for catching problems. A screener with low sensitivity sends struggling kids home with a green light. The kid had the condition. The test did not see it. The kid is now in a system that thinks they are fine.

Specificity is the number that matters for not over-flagging. A screener with low specificity floods intervention rooms with kids who do not actually need to be there. The kids get pulled into groups, the specialists’ caseloads balloon, and trust in the screener decays.

For a screener that is looking for dyslexia or other reading difficulties, sensitivity matters way more than specificity. The asymmetry is brutal.

A False Positive Is a Phone Call. A False Negative Is a Childhood.

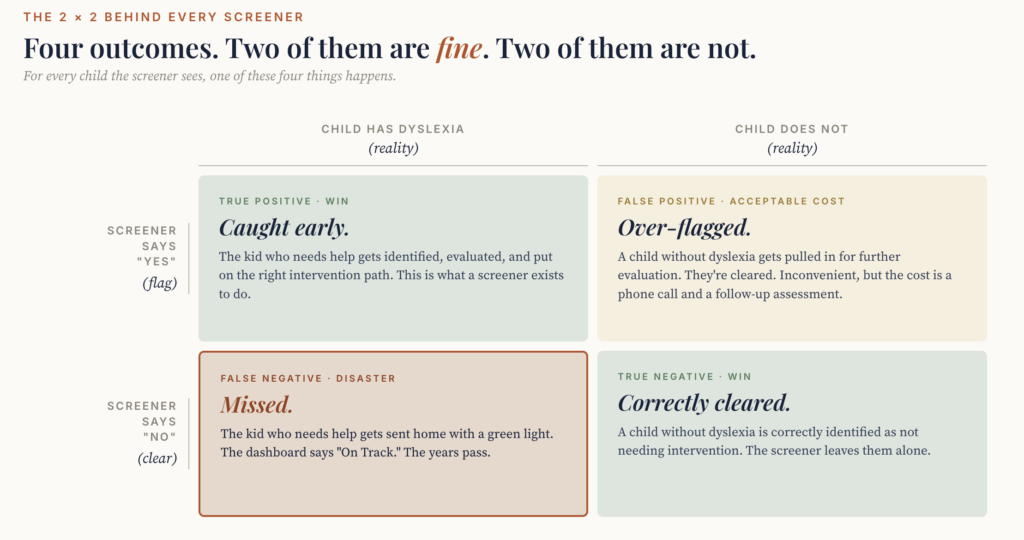

Here is the four-quadrant chart that lives behind every screener. It is the framework doctors use, and it is the framework you should be using too.

Two of the four quadrants are wins:

- True Positive (Caught early): the kid has the condition, the test caught it, the kid gets help. This is what a screener exists to do.

- True Negative (Correctly cleared): the kid does not have the condition, the test correctly cleared them, everyone moves on.

Two of the four quadrants are not wins:

- False Positive (Over-flagged): the kid does not have the condition, but the test flagged them anyway. They get pulled in for further evaluation, get cleared, life goes on. The cost is a phone call and a follow-up assessment.

- False Negative (Missed): the kid has the condition, but the test cleared them. The dashboard says On Track. The years pass.
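The four quadrants above can be sketched as a small Python function. The records here are hypothetical, and the helper name `quadrant` is just for illustration. Each record pairs what is actually true about the kid with what the screener said.

```python
from collections import Counter


def quadrant(has_condition, flagged):
    """Name the quadrant for one kid, using the labels from the chart."""
    if has_condition and flagged:
        return "True Positive (Caught early)"
    if not has_condition and not flagged:
        return "True Negative (Correctly cleared)"
    if not has_condition and flagged:
        return "False Positive (Over-flagged)"
    return "False Negative (Missed)"


# Hypothetical records: (kid actually has the condition, screener flagged them)
records = [
    (True, True),
    (True, False),
    (False, False),
    (False, False),
    (False, True),
    (True, False),
]

counts = Counter(quadrant(has, flagged) for has, flagged in records)
for name, n in counts.items():
    print(f"{name}: {n}")
```

Sensitivity only looks at the rows where the first value is `True`. Specificity only looks at the rows where it is `False`. That is why the two numbers can move independently.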

For a kid who actually has dyslexia, a false negative is not a neutral failure. The research on early intervention windows is unambiguous. Children who are still struggling readers at the end of third grade rarely catch up. Catching the problem in kindergarten or first grade gives the highest chance of full remediation. Catching it in fourth grade gives a much lower chance. Catching it in middle school or later is mostly damage control.

A screener that misses a real case in kindergarten is not just wrong. It is wrong in a way that locks in a worse outcome for the kid for the rest of their school career.

What This Should Drive in Screener Design

Knowing all of this, you might assume the screeners schools use are designed with high sensitivity for the population they are screening. They are not always.

Some screeners are designed for general academic placement and then marketed as also being useful for catching reading difficulties. Adaptive composites, the kind that average several reading skills into a single placement label, can have surprisingly low sensitivity for kids whose strengths in vocabulary or comprehension mask weakness in foundational skills like phonological awareness or decoding.

When the Michigan Department of Education reviewed the i-Ready Diagnostic in 2025 as a candidate K-3 dyslexia screener, one of the things they specifically asked for was classification accuracy data at cut scores in the 30th to 40th percentile range. That is the band where at-risk readers live: kids who have not yet failed but are showing early signs of difficulty. The vendor submitted accuracy data based on cut scores between the 10th and 20th percentile instead, the band where reading failure is already obvious. Sensitivity at the cut scores Michigan asked for was not provided.

That is one of several reasons Michigan rejected i-Ready as a dyslexia screener.

What to Ask the School

You do not need to memorize the technical definitions. You need to know enough to ask three specific questions about whatever screener your kid’s school uses:

What is the published sensitivity of this screener for the population it is being used to screen? This is the most important question. If the school cannot answer it, ask them to find out and follow up. If the vendor does not publish the number, that itself is information.

At what percentile cut score is the sensitivity number reported? Sensitivity reported at the 10th percentile cut score (severe difficulty) is much less useful than sensitivity reported at the 30th to 40th percentile (early at-risk). The first one tells you the screener catches kids who are already failing. The second tells you it catches kids in time to do something about it.
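To see why the cut score has to come with the sensitivity number, here is a rough Python sketch. It rests on assumptions that are mine: the data is invented, the cut score is treated as the percentile at or below which the screener flags a kid, and "struggling" means the kid later turned out to be a struggling reader. Real technical manuals may define these terms differently.

```python
def sensitivity_at_cut(kids, cut_percentile):
    """Share of truly struggling kids the screener flags at this cut score."""
    struggling = [pct for pct, struggles in kids if struggles]
    caught = [pct for pct in struggling if pct <= cut_percentile]
    return len(caught) / len(struggling)


# Hypothetical data: (screener percentile, later turned out to be struggling)
kids = [
    (5, True), (12, True), (18, True), (27, True),
    (33, True), (38, True), (45, True),
    (35, False), (52, False), (60, False), (75, False), (90, False),
]

for cut in (10, 20, 30, 40):
    print(f"cut at {cut}th percentile: sensitivity {sensitivity_at_cut(kids, cut):.0%}")
```

With these made-up kids, flagging only at the 10th percentile catches 1 of the 7 who go on to struggle, while flagging at the 40th catches 6 of 7. The same screener produces very different sensitivity figures depending on where the line is drawn, so a sensitivity number without its cut score tells you very little.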

Is this screener on the National Center on Intensive Intervention’s screening tools chart? NCII is a federally funded center that independently evaluates screeners using a standardized framework. If the screener your school uses is on the chart, you can look up its actual technical ratings yourself. If it is not on the chart, that is also information.

Why Doctors Talk About These Numbers and Schools Do Not

Here is the honest answer. Schools do not talk about sensitivity and specificity because the people writing the parent letters were not trained in psychometrics. The teachers, the IEP coordinators, the principals: they are working with the information the vendor’s marketing materials give them, which usually emphasizes the design’s strengths and skips the technical adequacy data.

The information exists. It is published in vendor technical manuals, in independent reviews, in NCII’s tools chart, in state evaluations like Michigan’s. It is just not put in front of parents. The expertise gap is real, on both sides of the meeting.

You can close some of that gap by asking the right questions. The three above are a start.

What to Do This Week

Find out what reading screener your kid’s school uses. The name of the product, the company that makes it, the version. Then look it up on the NCII screening tools chart. Read the technical rating. Note specifically the sensitivity and specificity ratings, and at what cut scores.

If the screener is not on the NCII chart, look up whether your state’s department of education has reviewed it. (If you are in Michigan, the K-12 Literacy and Dyslexia Law page has the approved list. Other states have their own lists, with varying levels of rigor.)

Bring what you find to your next parent-teacher conference. You will know more about the screener than the people administering it. That is not a comfortable feeling. It is also the position you have to be in to advocate for what your kid actually needs.


About Decoding Mom

Decoding Mom is written by a mom of a bright kid with ADHD and mild dyslexia. After too many late-night research binges trying to make phonics fun, she started this site to translate the science of reading, IEPs, and special-ed assessments for parents figuring it out the hard way. Honest, parent-first, no fluff.
